
Research Vision

Advanced Computer Vision Lab — Assured Computer Vision: Lean, Autonomous, Broad-Spectrum

As generative AI blurs the boundary between authentic and fabricated media, autonomous systems demand vision that never fails silently, and Earth observation enters a data-rich new era, the bar for deployable visual intelligence keeps rising. ACVLab responds with four interlocking research pillars.

Assured Visual Intelligence ensures that every visual AI output can be trusted — whether detecting DeepFakes under heavy compression, defending against adversarial perturbations, or authenticating media through proactive watermarking — providing the accountability that forensic, medical, and regulatory settings require.

Lean Visual Architectures rethink computation at every level of abstraction: prefix-scan reformulations of exact attention (ELSA), bitstream-level forensics that skip pixel decoding entirely, adaptive quantization that preserves accuracy at ultra-low bit widths (QuantTune/FracQuant), and joint transmission-restoration for bandwidth-constrained satellites — cutting latency, memory, and energy cost for sustainable, real-time deployment.

Autonomous Visual Perception extends vision from 2D images into 3D physical space: material-aware scene reconstruction with hyperspectral unmixing, BEV adversarial defense for self-driving (BFDM), physics-aligned shadow and reflection removal that feeds robust features to downstream robotic pipelines (PhaSR, ReflexSplit), and uncertainty-aware 3D annotation for autonomous driving datasets.

Broad-Spectrum Scientific Sensing pushes perception beyond the visible: universal hyperspectral restoration via vision-language prompts (PromptHSI), real-time CubeSat compressed sensing recognized with the Future Technology Award, hyperspectral pansharpening through sparse spectral representations (S3RNet), and cross-spectral forgery detection that reveals manipulation invisible to RGB analysis.

These pillars do not operate in isolation. Hyperspectral forensics merges trust with spectral sensing. On-satellite real-time inference merges efficiency with broad-spectrum data. BEV adversarial defense merges trust with embodied perception. This cross-pillar synergy is not accidental — it reflects a single underlying conviction: deployment-grade visual intelligence must be simultaneously trustworthy, efficient, embodied, and perceptually complete.

Research Pillars

- Autonomous Visual Perception: PhaSR, ReflexSplit, autonomous driving, tracking, embodied perception, 3D reconstruction

- Assured Visual Intelligence: GRACEv2, UMCL, DDD-Net, DeepFake detection, proactive authentication, trustworthy media analysis

- Broad-Spectrum Scientific Sensing: PromptHSI, S3RNet, CubeSat compressed sensing, remote sensing, satellite imaging

- Lean Visual Architectures: ELSA, QuantTune, FracQuant, bitstream-level inference, CubeSat on-board processing, edge deployment

A short introduction to my research: [PDF] (Last updated: Oct. 2024)

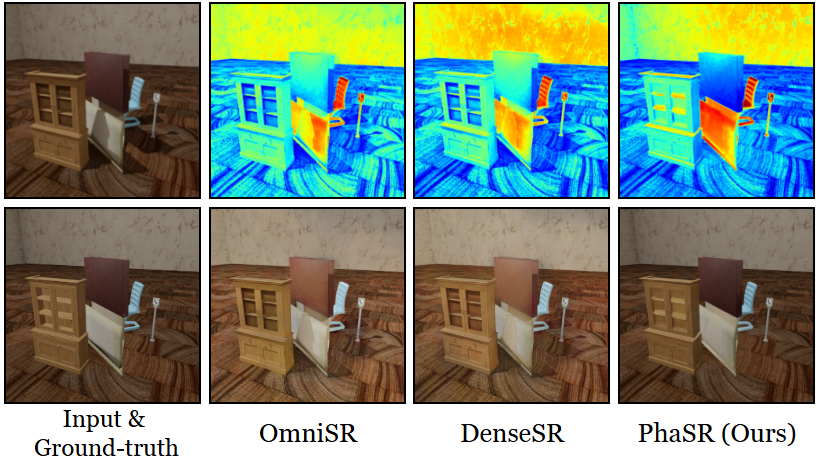

Robust Shadow Removal

PhaSR: Generalized Image Shadow Removal with Physically Aligned Priors

Accepted to IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2026.

Shadow removal under complex and multi-source lighting is hindered by the mismatch between physical illumination priors and learned features. PhaSR couples physically aligned normalization with geometry-semantic rectification to deliver robust shadow removal that generalizes beyond traditional single-light settings.

Research Direction. Autonomous Visual Perception / Robust Scene Recovery

Reflection Separation in the Wild

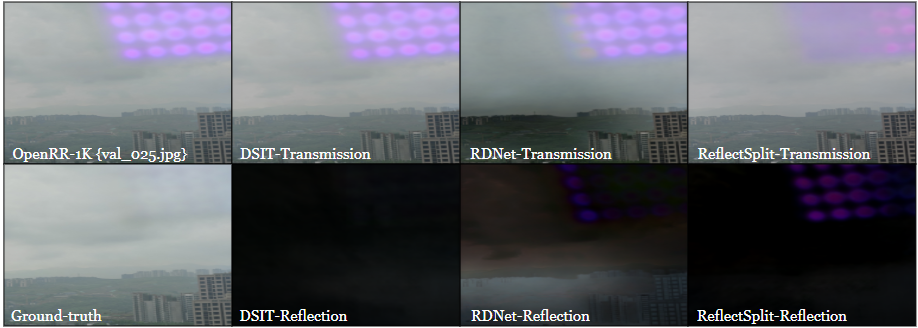

ReflexSplit: Single Image Reflection Separation via Layer Fusion-Separation

Accepted to IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2026.

Reflections on glass introduce nonlinear layer mixing that often breaks existing separation networks. ReflexSplit uses dual-stream fusion-separation blocks and curriculum training to achieve robust performance on both synthetic and real-world benchmarks.

Research Direction. Autonomous Visual Perception / Robust Scene Recovery

Efficient AI Inference

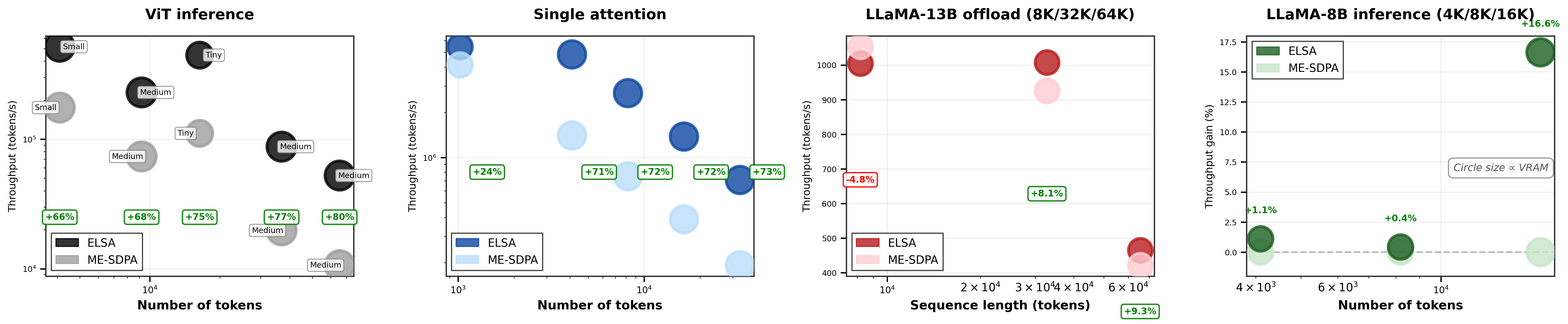

ELSA: Exact Linear-Scan Attention for Fast and Memory-Light Vision Transformers

Accepted to CVPR 2026 Findings (CVPRF).

ELSA reformulates exact softmax attention as a prefix scan over an associative monoid, achieving memory-light inference with provable FP32 stability and no retraining. Implemented in Triton and CUDA C++, it improves deployability on both data-center and edge hardware.
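The prefix-scan view of softmax attention can be illustrated with the well-known online-softmax merge rule. The sketch below is not the ELSA implementation (which, per the description above, is written in Triton and CUDA C++). It is a NumPy illustration for a single query, under the assumption that each partial attention state is a triple of running max, normalizer, and weighted value sum. The names `leaf`, `combine`, `tree_reduce`, and `scan_attention` are hypothetical.

```python
import numpy as np
from functools import reduce

def leaf(q, k, v):
    """State for one key/value pair: (max score, normalizer, weighted value sum)."""
    s = float(q @ k) / np.sqrt(q.shape[0])
    return (s, 1.0, v.astype(np.float64))

def combine(a, b):
    """Associative merge of two partial softmax states (assumed monoid operator)."""
    m_a, l_a, o_a = a
    m_b, l_b, o_b = b
    m = max(m_a, m_b)
    # Rescale both sides to the shared max so exponentials never overflow.
    w_a, w_b = np.exp(m_a - m), np.exp(m_b - m)
    return (m, l_a * w_a + l_b * w_b, o_a * w_a + o_b * w_b)

def tree_reduce(states):
    """Reduce in balanced-tree order, the grouping a parallel scan would use."""
    if len(states) == 1:
        return states[0]
    mid = len(states) // 2
    return combine(tree_reduce(states[:mid]), tree_reduce(states[mid:]))

def scan_attention(q, K, V, tree=False):
    """Exact softmax attention for one query, built only from `combine`."""
    states = [leaf(q, k, v) for k, v in zip(K, V)]
    m, l, o = tree_reduce(states) if tree else reduce(combine, states)
    return o / l

def reference_attention(q, K, V):
    """Standard softmax attention, used only to check the sketch."""
    s = K @ q / np.sqrt(q.shape[0])
    w = np.exp(s - s.max())
    return (w / w.sum()) @ V
```

Because `combine` is associative, the sequential fold and the tree-ordered reduction agree with the reference up to floating-point rounding, and no full attention matrix is ever materialized.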

Research Direction. Lean Visual Architectures / Hardware-Agnostic Inference

arXiv preprint coming soon

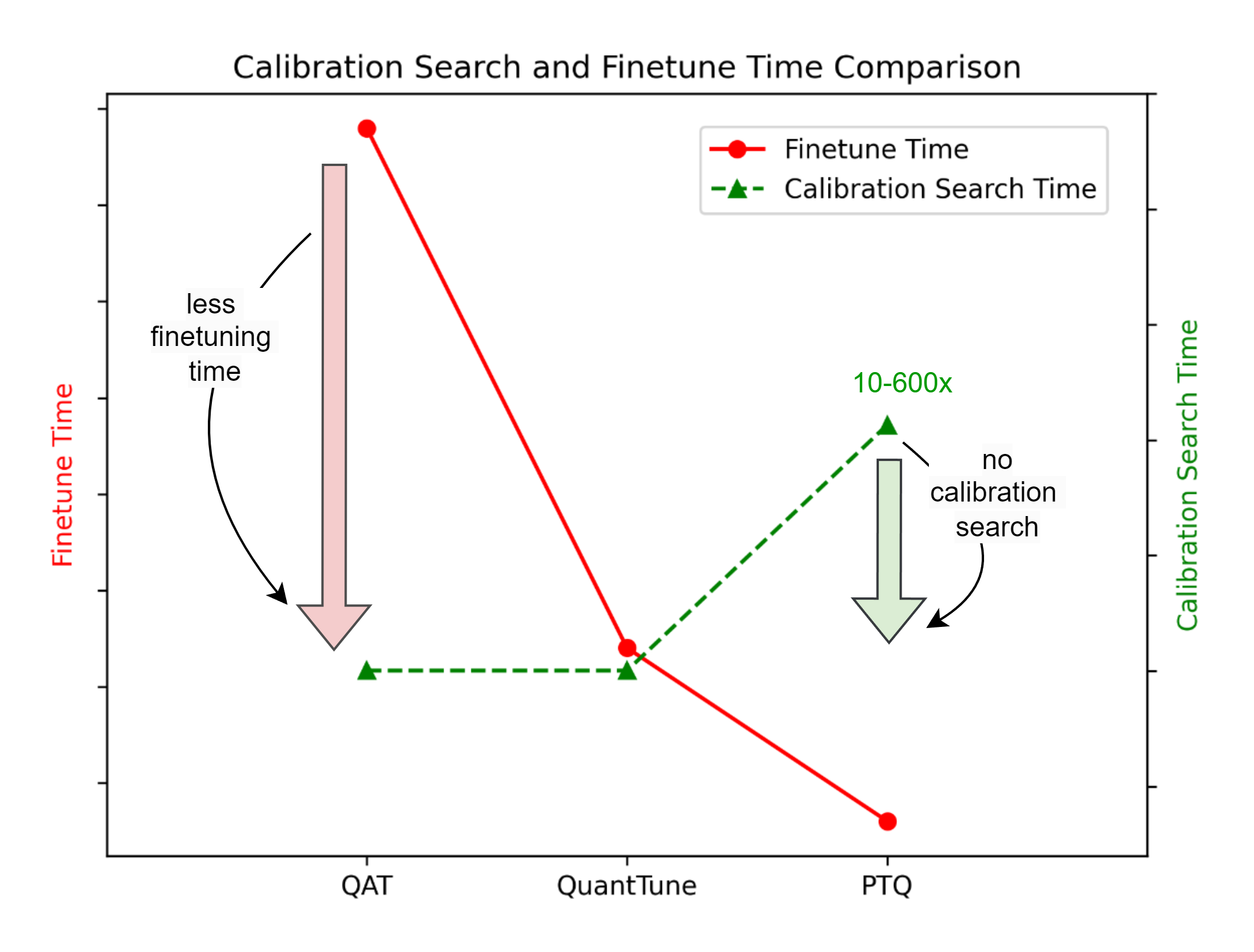

Quantization-Friendly Deployment

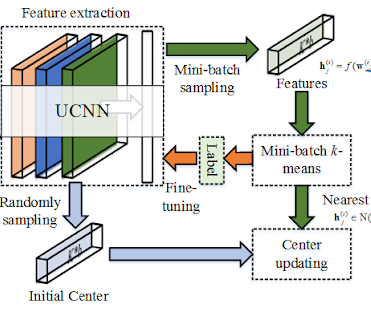

QuantTune: Optimizing Model Quantization with Adaptive Outlier-Driven Fine Tuning

Published in IEEE International Conference on Multimedia Information Processing and Retrieval (MIPR) 2025.

QuantTune addresses outlier-driven dynamic range amplification during Transformer quantization and substantially reduces accuracy loss under low-bit settings. The method requires no extra inference-time hardware complexity and transfers across ViT, BERT, and OPT models.
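To see why outliers hurt low-bit quantization, consider a minimal NumPy sketch of symmetric per-tensor uniform quantization. This is not QuantTune itself, which is a fine-tuning method. The 4-bit setting, the Gaussian activations, the injected outlier value, and the helper names `quantize_dequantize` and `mse` are all assumptions made for illustration.

```python
import numpy as np

def quantize_dequantize(x, bits=4):
    """Symmetric per-tensor uniform quantization followed by dequantization."""
    qmax = 2 ** (bits - 1) - 1
    scale = np.abs(x).max() / qmax          # the largest magnitude sets the step size
    q = np.clip(np.round(x / scale), -qmax, qmax)
    return q * scale

def mse(a, b):
    """Mean squared error between two arrays."""
    return float(np.mean((a - b) ** 2))

rng = np.random.default_rng(0)
x = rng.normal(0.0, 1.0, size=4096)         # stand-in for well-behaved activations

x_out = x.copy()
x_out[0] = 50.0                             # hypothetical single outlier

# Error on the ordinary values, without and with the outlier present.
err_clean = mse(x, quantize_dequantize(x))
err_outlier = mse(x_out[1:], quantize_dequantize(x_out)[1:])
print(err_clean, err_outlier)
```

A single large value stretches the dynamic range, so the step size grows and most ordinary values collapse onto a handful of levels. Reducing that outlier-driven range is the problem the paragraph above describes.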

Research Direction. Lean Visual Architectures / Quantization-Aware Deployment

[arXiv] [IEEE Xplore]

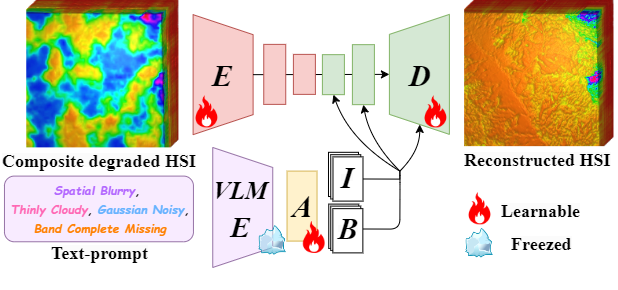

Universal Hyperspectral Restoration

PromptHSI: Universal Hyperspectral Image Restoration with Vision-Language Modulated Frequency Adaptation

Published in IEEE Transactions on Geoscience and Remote Sensing (TGRS), Early Access, Feb. 2026.

PromptHSI is a universal all-in-one framework for hyperspectral restoration that combines frequency-aware modulation with vision-language guided prompt learning. A single model can handle cloud occlusion, blur, noise, and spectral band loss across remote sensing scenarios.

Research Direction. Broad-Spectrum Scientific Sensing / Hyperspectral Restoration

[IEEE Xplore] [arXiv] [GitHub]

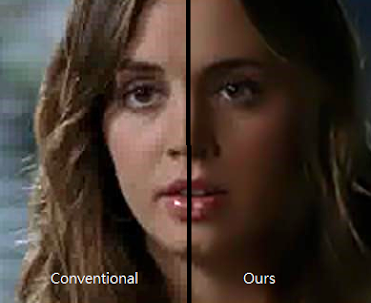

Media Security & DeepFake Robustness

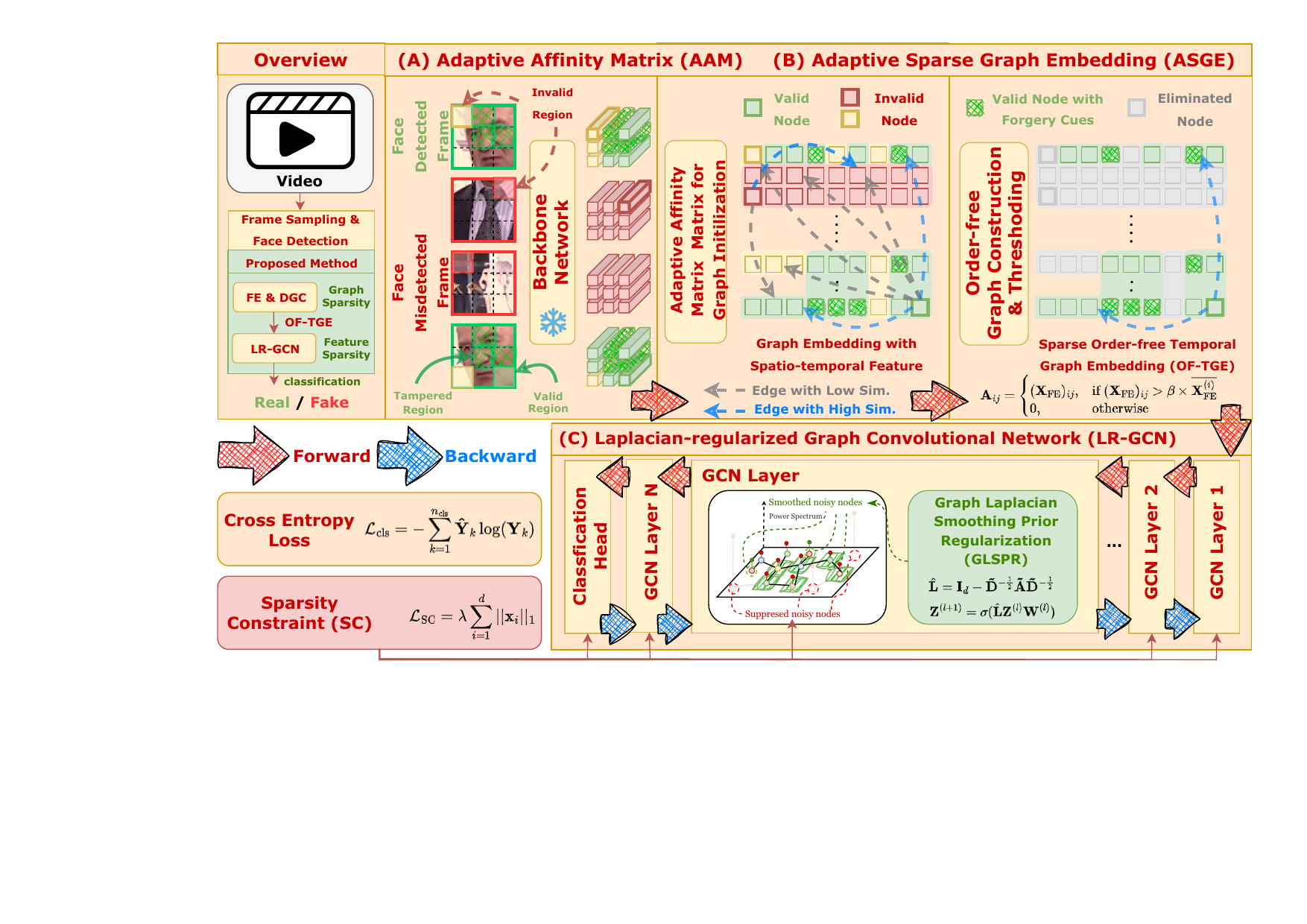

Towards Robust DeepFake Detection under Unstable Face Sequences: Adaptive Sparse Graph Embedding with Order-Free Representation and Explicit Laplacian Spectral Prior

Submitted to IEEE Transactions on Information Forensics and Security (TIFS).

GRACEv2 targets unstable face sequences caused by compression, occlusion, and shuffled or missing frames. By combining order-free temporal graph embedding with an explicit Laplacian spectral prior, it improves robust DeepFake detection under severe real-world disruptions.
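One reason a Laplacian spectrum pairs naturally with an order-free representation is that the eigenvalues of a graph Laplacian do not change when the nodes are relabeled. The sketch below illustrates only that property. It assumes a dense Gaussian-kernel similarity graph over per-frame feature vectors, which is not the adaptive sparse graph of GRACEv2. The function `laplacian_spectrum` and the parameter `sigma` are hypothetical.

```python
import numpy as np

def laplacian_spectrum(frames, sigma=2.0):
    """Sorted eigenvalues of L = D - W for a Gaussian-kernel frame graph."""
    diff = frames[:, None, :] - frames[None, :, :]
    d2 = (diff ** 2).sum(axis=-1)               # pairwise squared distances
    W = np.exp(-d2 / (2.0 * sigma ** 2))        # similarity weights
    np.fill_diagonal(W, 0.0)                    # no self-loops
    L = np.diag(W.sum(axis=1)) - W              # unnormalized graph Laplacian
    return np.linalg.eigvalsh(L)                # eigenvalues in ascending order

rng = np.random.default_rng(0)
frames = rng.normal(size=(12, 8))               # 12 frames, 8-dim features (synthetic)
shuffled = frames[rng.permutation(12)]          # same frames in a different order

same = np.allclose(laplacian_spectrum(frames), laplacian_spectrum(shuffled))
print(same)
```

Shuffling the frames permutes the rows and columns of the Laplacian together, which leaves its eigenvalues unchanged, so a descriptor built on the spectrum does not depend on frame order.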

Research Direction. Assured Visual Intelligence / Robust DeepFake Detection

[arXiv]

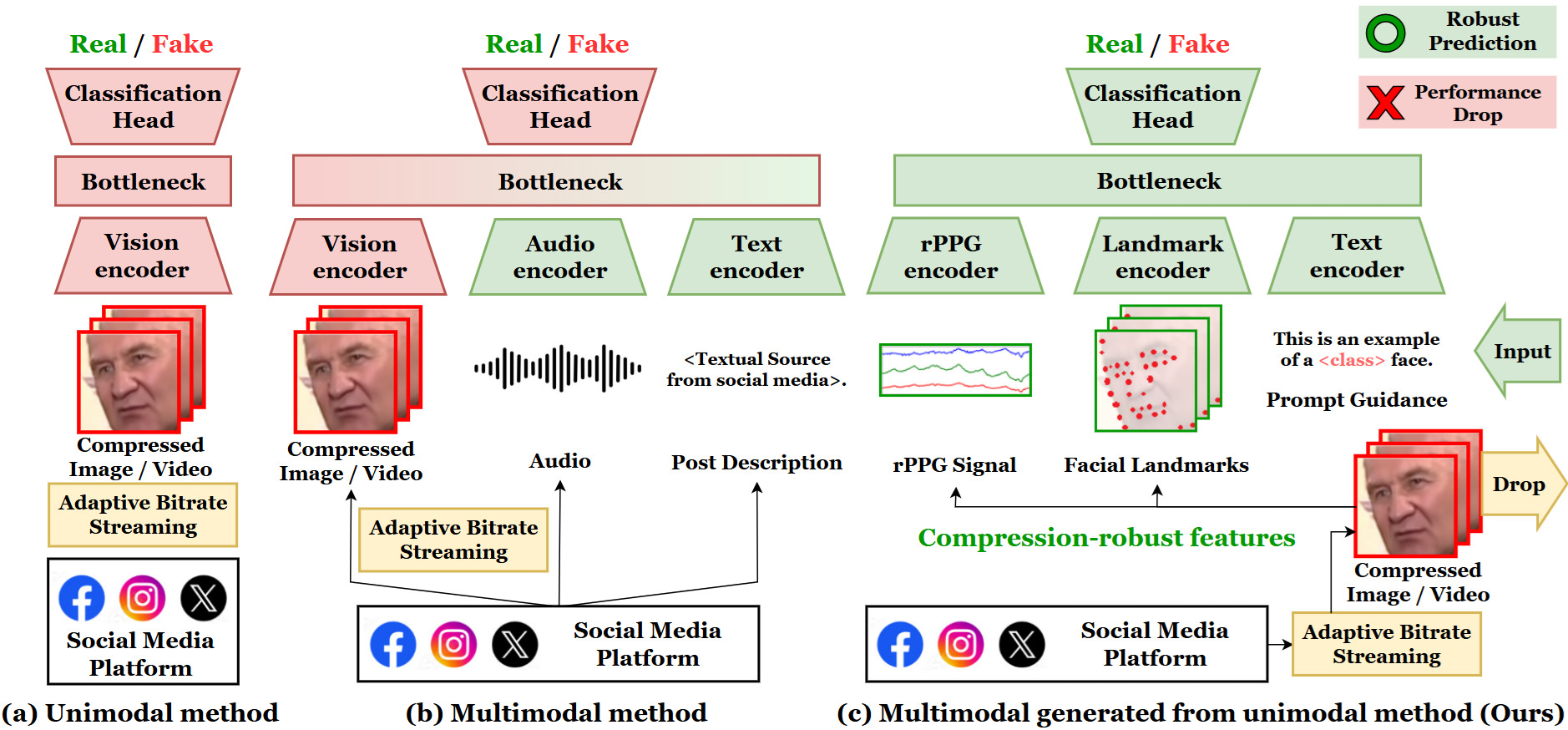

Cross-Compression DeepFake Detection

UMCL: Unimodal-Generated Multimodal Contrastive Learning for Cross-compression-rate Deepfake Detection

Published in International Journal of Computer Vision (IJCV), Jan. 2026.

UMCL synthesizes compression-robust multimodal cues, including rPPG, temporal landmarks, and semantic embeddings, from a single visual input. The framework improves cross-compression DeepFake detection while preserving interpretable feature relationships.
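The contrastive part of such a framework can be pictured with a generic InfoNCE-style objective. This is a sketch under assumptions, not the UMCL loss: the source does not state the exact objective, and the function `info_nce`, the `temperature` value, and the synthetic embeddings are all hypothetical.

```python
import numpy as np

def info_nce(z_a, z_b, temperature=0.1):
    """InfoNCE loss where row i of z_a should match row i of z_b."""
    z_a = z_a / np.linalg.norm(z_a, axis=1, keepdims=True)
    z_b = z_b / np.linalg.norm(z_b, axis=1, keepdims=True)
    logits = z_a @ z_b.T / temperature                  # cosine similarities
    logits = logits - logits.max(axis=1, keepdims=True) # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_prob)))           # matched pairs on the diagonal

rng = np.random.default_rng(0)
z_a = rng.normal(size=(32, 16))                         # embeddings of one cue (synthetic)
z_b = z_a + 0.05 * rng.normal(size=(32, 16))            # a second cue of the same samples

loss_aligned = info_nce(z_a, z_b)
loss_mismatched = info_nce(z_a, np.roll(z_b, 1, axis=0))  # pairs deliberately misaligned
print(loss_aligned, loss_mismatched)
```

The loss is low when cues derived from the same sample sit close together and high when they are mismatched, which is the pressure that pulls different cue embeddings of one video toward each other.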

Research Direction. Assured Visual Intelligence / Cross-Compression Forensics

New SOTA SR Model

DRCT: Saving Image Super-Resolution away from Information Bottleneck

Presented at IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2024, NTIRE Workshop [Oral].

Chih-Chung Hsu, Chia-Ming Lee, Yi-Shiuan Chou

Research Direction. Lean Visual Architectures / Efficient Super-Resolution

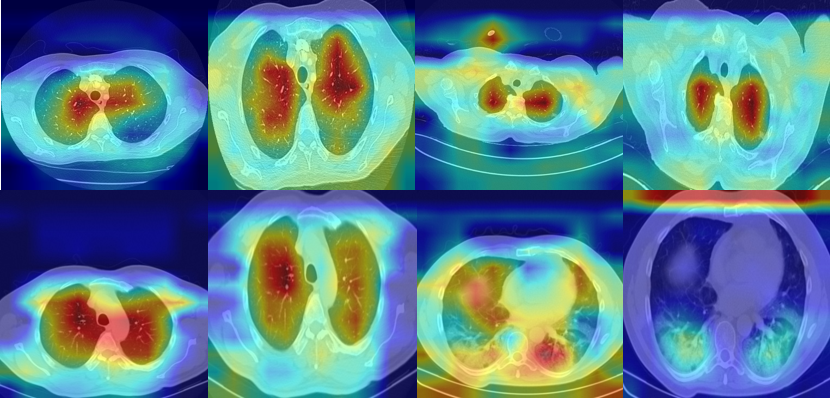

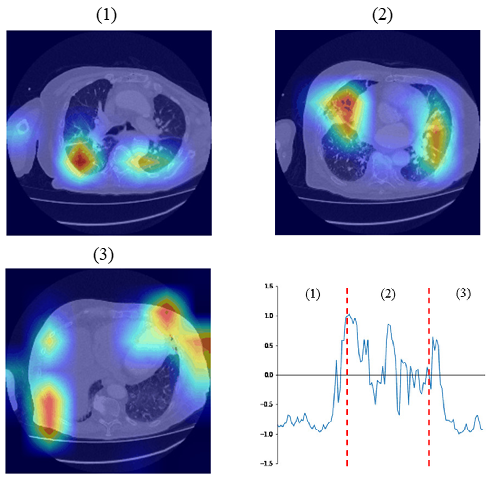

Semi-Supervised Learning in CT Scan Detection

A Closer Look at Spatial-Slice Features for COVID-19 Detection

Presented at IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2024, DEF-AI-MIA Workshop.

Chih-Chung Hsu, Chia-Ming Lee, Yang Fan Chiang, Yi-Shiuan Chou, Chih-Yu Jiang, Shen-Chieh Tai, Chi-Han Tsai

Research Direction. Assured Visual Intelligence / Medical Imaging

[PDF] [arXiv] [GitHub] [Project Page]

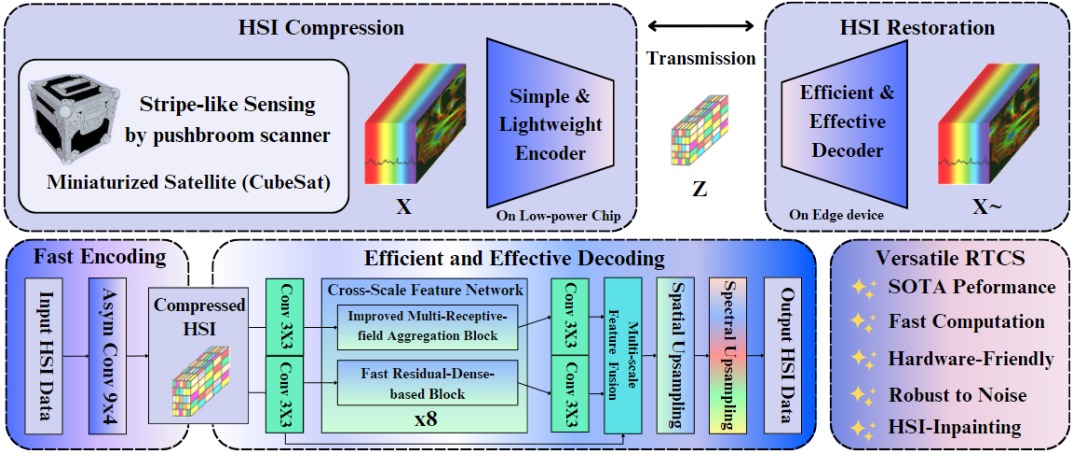

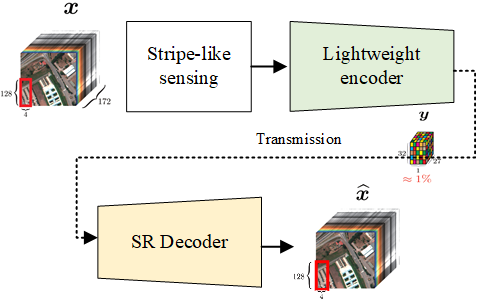

Ultra Fast Hyperspectral Image Compressive Sensing

Real-Time Compressed Sensing for Joint Hyperspectral Image Transmission and Restoration for CubeSat

Published in IEEE Transactions on Geoscience and Remote Sensing (TGRS).

Future Tech Award (未來科技獎)

Chih-Chung Hsu, Chih-Yu Jian, Eng-Shen Tu, Chia-Ming Lee, Guan-Lin Chen

Research Direction. Broad-Spectrum Scientific Sensing × Lean Visual Architectures

[IEEE Xplore] [GitHub]

COVID-19 Symptoms Detection in CT Scan

Selected challenge papers and results

ECCV Workshop 2022 [1st place in COV19D challenge]

IEEE ICCV Workshop 2021 [3rd place in COV19D challenge]

Our models are designed for noisy, in-the-wild CT scans and remain robust across varying spatial and slice resolutions.

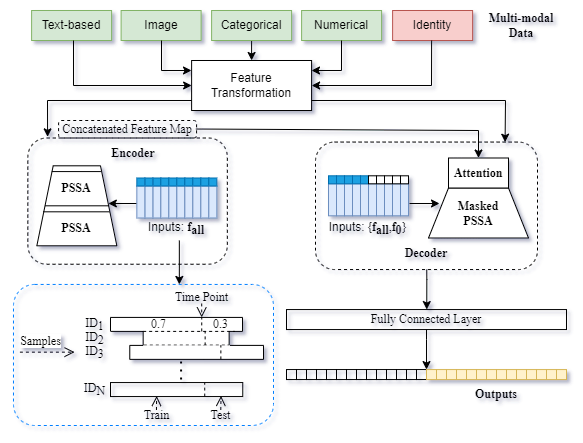

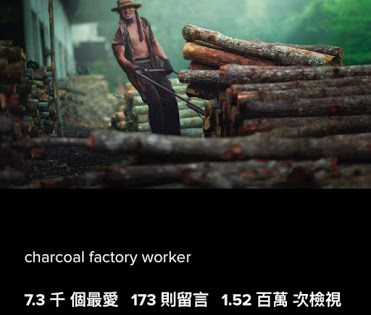

Social Media Prediction as a Longitudinal Task (2022-)

A Comprehensive Study of Spatiotemporal Feature Learning for Social Media Popularity Prediction

Published in ACM Multimedia 2022.

C.C. Hsu, P.J. Tsai, T.C. Yeh, and X.U. Hou

We reformulate social media popularity prediction as an identity-preserving longitudinal task and study how multimodal temporal features improve prediction reliability over time.

[PDF]

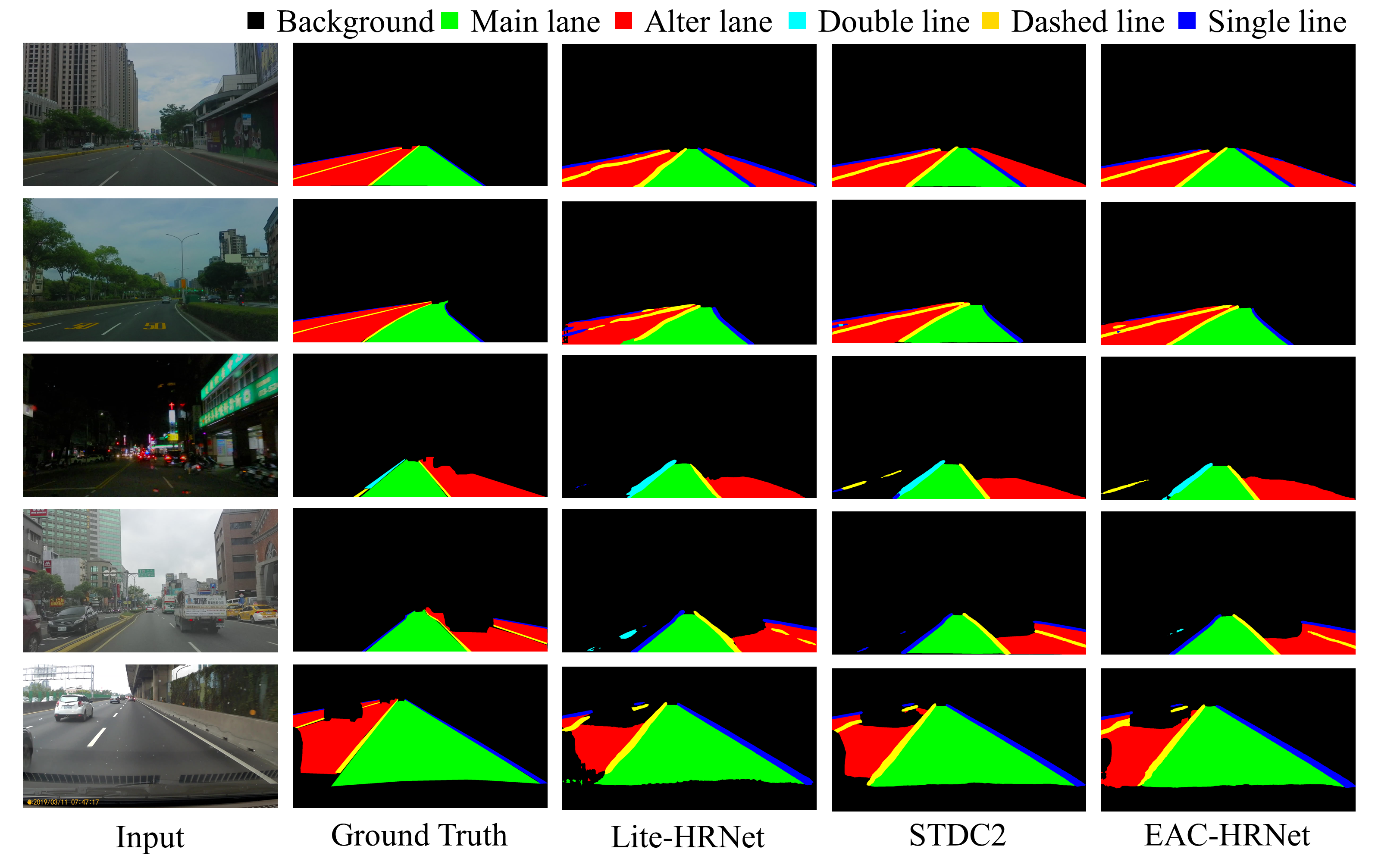

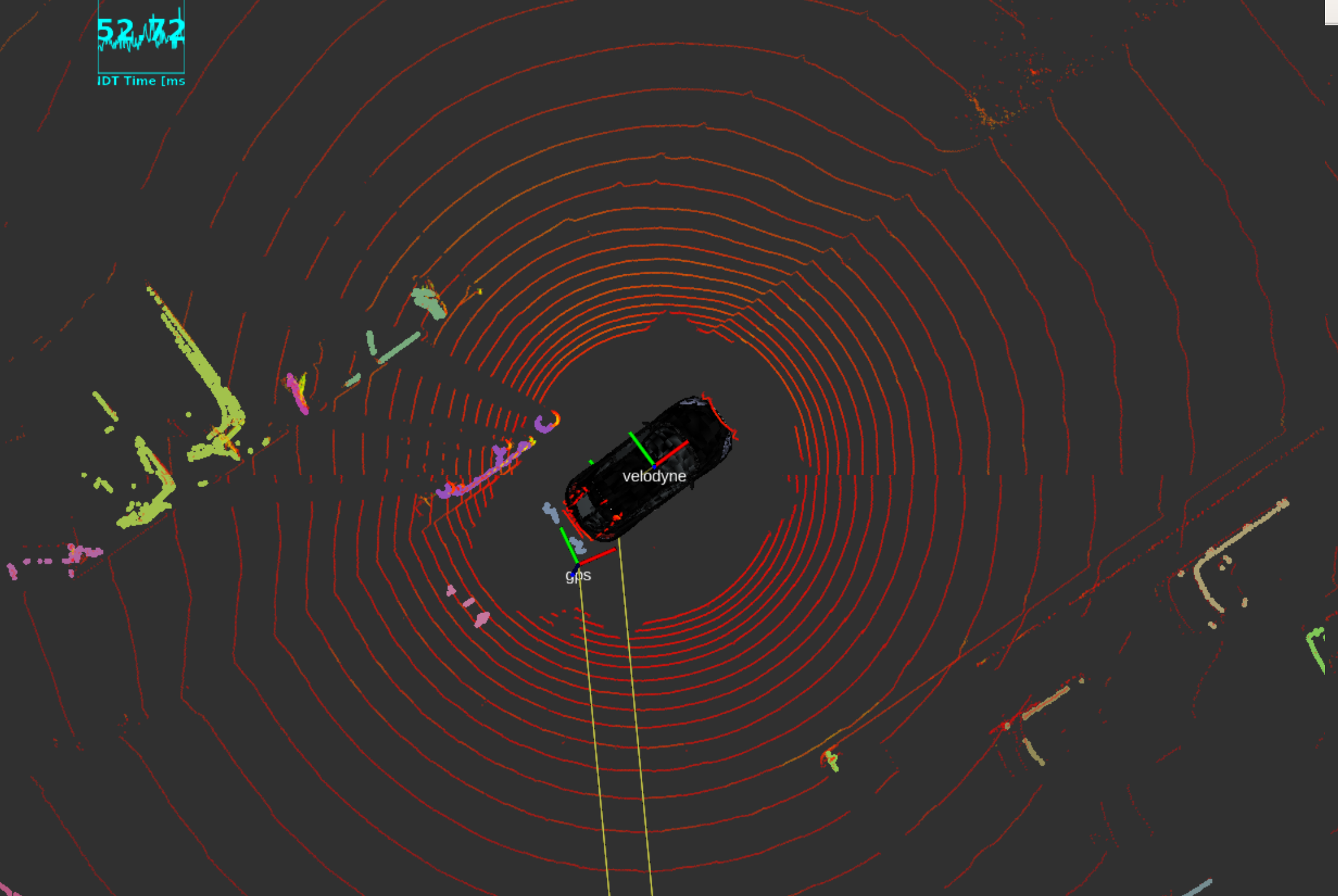

Semantic Segmentation for Autonomous Driving (2021-)

Selected papers for robust and efficient scene understanding

IEEE ICME Workshop 2022

Augmented-Training-Aware Bisenet for Real-Time Semantic Segmentation [PDF]

IEEE ICASSP 2022

DCSN: Deformable Convolutional Semantic Segmentation Neural Network for Non-Rigid Scenes [PDF]

These projects focus on stable, real-time semantic understanding for autonomous driving, balancing robustness and low-compute deployment.

Fake Image/Video (DeepFake) Detection (2018-)

Selected papers and outreach

IEEE ICIP 2019 and Applied Sciences

Detecting Generated Image Based on Coupled Network with Two-Step Pairwise Learning

IEEE IS3C 2018

Learning to Detect Fake Face Images in the Wild

[Project] [PDF] [GitHub] [Online Demo]

Detection of forged and fabricated photos, with a focus on trustworthy media analysis and on countering fake photos and fake news.

Deep Compressed Sensing for Hyperspectral Images (2020-)

Selected papers for efficient satellite sensing

IEEE Transactions on Geoscience and Remote Sensing

DCSN: Deep Compressed Sensing Network for Efficient Hyperspectral Data Transmission of Miniaturized Satellite [PDF]

CVGIP 2020

Deep Joint Compression and Super-Resolution Low-Rank Network for Fast Hyperspectral Data Transmission

Development of deep-learning-based super-resolution and compressed sensing techniques for hyperspectral and multispectral images.

Decision-Making of Autonomous Vehicles Using Vision Information (2019-)

Selected work on robust visual decision-making

Multimedia Tools and Applications

Deep Learning-based Vehicle Trajectory Prediction based on Generative Adversarial Network for Autonomous Driving Applications

IEEE ICCE-TW 2020

Learning to Predict Risky Driving Behaviors for Autonomous Driving

[Large-Scale Vehicle Collision Dataset @ TW] [Link]

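Prediction of risky driving behavior for autonomous-vehicle vision systems, and construction of a dataset of Taiwanese road scenes.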

Social Media Prediction (2016-)

Selected outputs and awards

- ACM Multimedia 2017-2020

- Social Media Prediction Based on Residual Learning and Random Forest (2017). See the publication list for newer versions.

- 2 Best-Performance Awards and 2 Top-Performance Awards

- Best Grand Challenge Paper Award (2017)

- [GitHub] [PDF]

Predicting the click-through rate of social media posts and how their popularity changes over the long term.

Image Deblocking and Super-Resolution (2013-2014)

Learning-Based Joint Super-Resolution and Deblocking for a Highly Compressed Image

Published in IEEE Transactions on Multimedia (TMM) 2015 and presented at MMSP 2013.

MMSP 2013 Top 10% Paper Award

[Project Page] [PDF] [Matlab Source Code (32-bit only)]

Removes blocking artifacts and increases resolution at the same time, so that upscaled images stay sharp.

Super-Resolution of Textured Video (2012-2014)

Temporally Coherent Super-Resolution of Textured Video via Dynamic Texture Synthesis

Published in IEEE Transactions on Image Processing (TIP) and presented at MMSP 2014.

[Project Page] [PDF] [Matlab Code]

Super-resolution for dynamic-texture video that improves detail and temporal consistency after upscaling.

Quality Assessment for Image Retargeting (2011-2013)

Objective Quality Assessment for Image Retargeting Based on Perceptual Geometric Distortion and Information Loss

Published in IEEE Journal of Selected Topics in Signal Processing and presented at VCIP 2013.

[Project Page] [PDF] [Matlab Code]

Assesses the quality of image retargeting methods by quantifying geometric distortion and information loss.

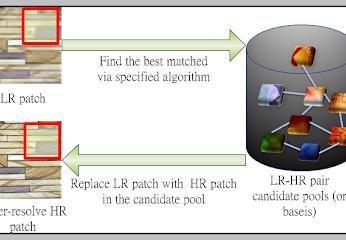

Super-Resolution (2010-2011)

Image Super-Resolution via Feature-Based Affine Transform

Presented at MMSP 2011.

[Project Page] [PDF] [Executable Code (Matlab)]

Note. We provide an implementation of NLM with the proposed method as an example.

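Image super-resolution relies on an example database; we propose a method that enriches the variety of the database and improves upscaling quality.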

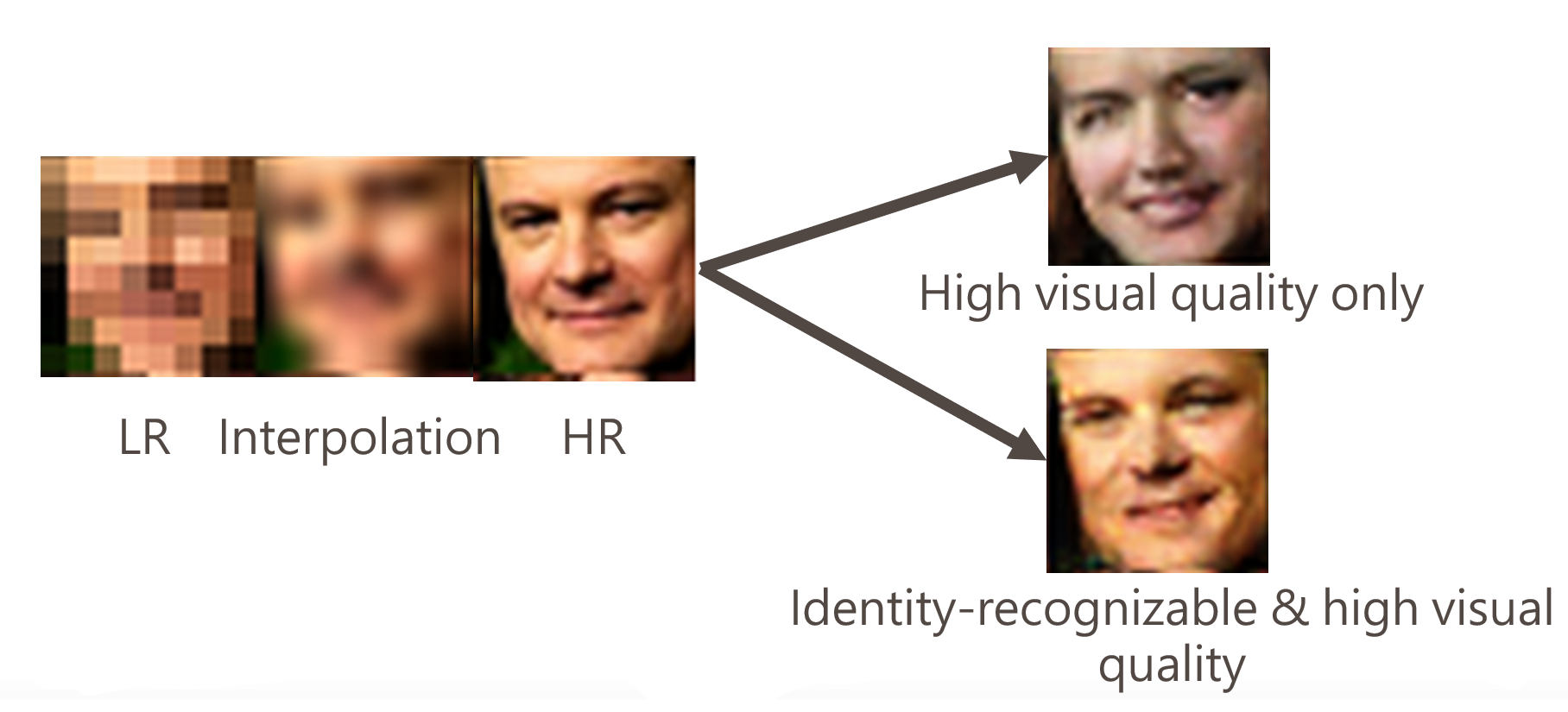

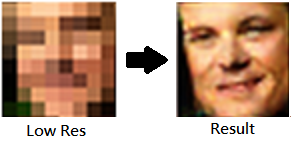

Face Hallucination (2008-2010)

Face Hallucination Using Bayesian Global Estimation and Local Basis Selection

Presented at MMSP 2010.

[Project Page] [PDF] [Matlab Code & Database]

Face super-resolution that reconstructs a clearer face from an extremely low-resolution face image.

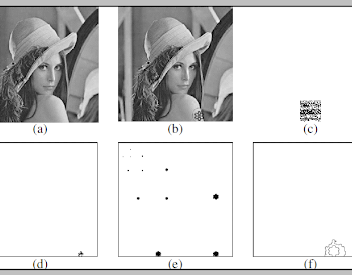

Video Forensics (2007-2008)

Video Forgery Detection Using the Correlation of Noise Residue

Presented at MMSP 2008.

Citations > 100

[PDF] [Matlab Code] [Database]

Video forensics, with a focus on video forgery detection and trustworthy media analysis.

Image Authentication (2006-2007)

Image Authentication and Tampering Localization Based on Watermark Embedding in the Wavelet Domain

Published in Optical Engineering.

[PDF] [Source Code]

Embeds a watermark in the image that withstands various attacks, enabling image authentication and tampering localization.